ResearchAudio.io

16,896 Cores. One Chip. Why CPUs Never Stood a Chance.

Inside the memory hierarchy that powers every AI model you use.

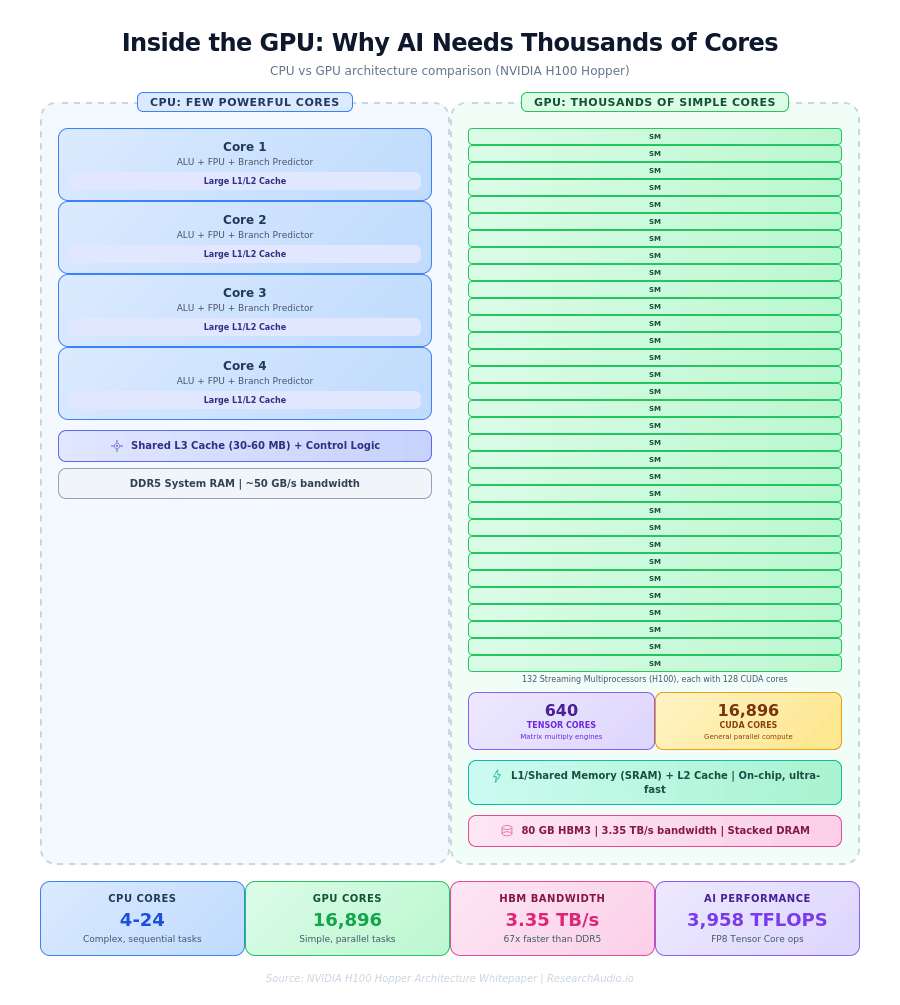

A single NVIDIA H100 GPU contains 16,896 CUDA cores and 640 Tensor Cores, delivering up to 3,958 teraflops of FP8 performance (with sparsity). A typical server CPU has about 24 cores. That 700:1 ratio is not a bug in the design. It is the entire reason AI works at the speed it does. Training GPT-4 required an estimated 2.15 × 10^25 floating point operations. The vast majority of those operations were matrix multiplies, and matrix multiplies are exactly the kind of task that splits into thousands of independent pieces. This is the story of why GPUs exist, how they work under the hood, and why every dollar spent on AI infrastructure flows through a memory hierarchy most engineers never think about.

What Is a GPU, Really

A GPU (Graphics Processing Unit) started life rendering pixels for video games. Every frame on screen requires the same math applied independently to millions of pixels: calculate color, apply lighting, determine depth. The hardware that evolved to do this, thousands of tiny processors all running the same instruction on different data, turned out to be the ideal architecture for neural networks.

A CPU (Central Processing Unit) is built for versatility. It has a few powerful cores (4 to 24 in typical server chips), each packed with branch predictors, out-of-order execution units, and large private caches. CPUs handle complex sequential logic well: running an operating system, parsing JSON, managing network connections.

A GPU trades all of that complexity for raw parallel throughput. Instead of 24 sophisticated cores, NVIDIA's H100 packs 132 Streaming Multiprocessors (SMs), each containing 128 CUDA cores. These cores are simple: they execute a fused multiply-add (FMA) operation, computing A x B + C in one clock cycle. But when 16,896 of them run simultaneously, the aggregate throughput is staggering.

Why AI Runs on GPUs, Not CPUs

Neural network training boils down to one operation repeated trillions of times: matrix multiplication. When you multiply two matrices, every element in the output can be computed independently. A 1000x1000 matrix multiply produces 1,000,000 output values, each needing its own dot product. A CPU computes these one at a time (or a few at a time with SIMD). A GPU computes thousands simultaneously.
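To make the independence claim concrete, here is a minimal sketch in plain Python. The helper names (`output_element`, `matmul`, the `order` parameter) are illustrative assumptions, not part of any library. Each output cell reads only one row of A and one column of B, so the cells can be filled in any order, which is the property a GPU exploits by assigning them to different threads.

```python
def dot(row, col):
    """Dot product of two equal-length sequences."""
    return sum(a * b for a, b in zip(row, col))

def output_element(A, B, i, j):
    """Compute C[i][j] alone, reading only row i of A and column j of B."""
    return dot(A[i], [B[k][j] for k in range(len(B))])

def matmul(A, B, order=None):
    """Fill C one cell at a time, in whatever order the caller picks."""
    rows, cols = len(A), len(B[0])
    cells = [(i, j) for i in range(rows) for j in range(cols)]
    if order is not None:
        cells = order(cells)  # hypothetical hook: reorder the work list
    C = [[0] * cols for _ in range(rows)]
    for i, j in cells:
        C[i][j] = output_element(A, B, i, j)
    return C

A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
forward = matmul(A, B)
backward = matmul(A, B, order=lambda cells: list(reversed(cells)))
print(forward)              # [[19, 22], [43, 50]]
print(forward == backward)  # True: the order of the cells does not matter
```

Because no cell depends on another, the loop over `cells` could be handed to thousands of workers at once without changing the result.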

Think of it this way: a CPU is a single expert mathematician who can solve any equation very fast. A GPU is a stadium full of 16,000 students who each know basic arithmetic. If the task is "solve one differential equation," the expert wins. If the task is "multiply these two giant matrices," the stadium wins by orders of magnitude.

Three things make GPUs indispensable for AI. First, the math fits: the transformer architecture (which powers GPT, Claude, Gemini, and every major language model) relies on dense matrix operations in its attention mechanism and feed-forward layers. Second, GPUs are programmable through mature software: NVIDIA's CUDA platform has been a compounding advantage for over 15 years, with more than 30 million downloads and 3 million developers. Third, Tensor Cores perform matrix multiply-accumulate operations directly in hardware, delivering far more throughput than standard CUDA cores for AI workloads (roughly 60x at FP8 on the H100).

Key Insight: CPUs dedicate most of their transistor budget to control logic (branch prediction, out-of-order execution, speculative fetching). GPUs spend that budget on more compute units instead. In the H100, approximately 50,000 transistors per CUDA core handle the actual FMA unit, while the rest enable scheduling across thousands of threads.

The Moving Parts Inside a GPU

A modern data-center GPU contains several distinct subsystems, each critical to performance.

Streaming Multiprocessors (SMs) are the fundamental compute blocks. The H100 has 132 of them, organized into 8 Graphics Processing Clusters (GPCs). Each SM contains 128 FP32 CUDA cores, 4 fourth-generation Tensor Cores, warp schedulers, and special function units for operations like square root and trigonometry. An SM can host up to 2,048 concurrent threads organized into groups of 32 called warps, which works out to 64 warps per SM. Each SM has four warp schedulers, and each scheduler issues one instruction per cycle, so the SM can issue instructions from four warps simultaneously.

CUDA Cores are the workhorse arithmetic units. Each one executes a fused multiply-add per clock cycle at the GPU's boost clock, and each FMA counts as two floating point operations. At a boost clock of roughly 1.98 GHz, 16,896 cores produce approximately 67 teraflops of FP32 throughput.
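The throughput figure follows from simple arithmetic, sketched below. The SM and core counts come from the text; the 1.98 GHz boost clock is an assumption chosen because it reproduces the 67 teraflops figure, and the function name is illustrative.

```python
SMS = 132                # Streaming Multiprocessors on the H100 (from the text)
CORES_PER_SM = 128       # FP32 CUDA cores per SM (from the text)
THREADS_PER_SM = 2048    # maximum resident threads per SM (from the text)
WARP_SIZE = 32           # threads per warp (from the text)
FLOPS_PER_FMA = 2        # one multiply plus one add

def peak_fp32_tflops(clock_hz):
    """Peak FP32 throughput if every core retires one FMA per cycle."""
    cores = SMS * CORES_PER_SM
    return cores * FLOPS_PER_FMA * clock_hz / 1e12

total_cores = SMS * CORES_PER_SM
warps_per_sm = THREADS_PER_SM // WARP_SIZE

print(total_cores)                         # 16896
print(warps_per_sm)                        # 64
print(round(peak_fp32_tflops(1.98e9), 1))  # 66.9 (assumed 1.98 GHz boost clock)
print(round(peak_fp32_tflops(1.80e9), 1))  # 60.8 (same formula at 1.8 GHz)
```

This is a theoretical peak. Real kernels reach it only when every core has an FMA to execute on every cycle, which in practice depends on memory keeping up.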

Tensor Cores are the AI-specific hardware. Unlike CUDA cores, which operate on individual numbers, Tensor Cores perform matrix multiply-accumulate on small matrix tiles (for example, 4x4 or 8x4 blocks) in a single operation. The H100's 640 Tensor Cores support FP8, FP16, BFloat16, TF32, and FP64 precision formats. At FP8 with sparsity, they deliver up to 3,958 teraflops, roughly 60x the FP32 throughput of the CUDA cores alone.
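The tile operation can be sketched in plain Python. This is an illustration of the multiply-accumulate pattern D = A x B + C on 4x4 tiles, not a model of how the hardware is actually wired; `TILE`, `tile_mma`, `get_tile`, and `tiled_matmul` are hypothetical names.

```python
TILE = 4  # illustrative tile edge, matching the 4x4 example in the text

def tile_mma(a, b, c):
    """Return a @ b + c for TILE x TILE tiles: one multiply-accumulate step."""
    return [[c[i][j] + sum(a[i][k] * b[k][j] for k in range(TILE))
             for j in range(TILE)] for i in range(TILE)]

def get_tile(M, r, c):
    """Copy the TILE x TILE block of M whose top-left corner is (r, c)."""
    return [row[c:c + TILE] for row in M[r:r + TILE]]

def tiled_matmul(A, B):
    """Multiply square matrices whose size is a multiple of TILE, tile by tile."""
    n = len(A)
    C = [[0] * n for _ in range(n)]
    for r in range(0, n, TILE):
        for c in range(0, n, TILE):
            acc = [[0] * TILE for _ in range(TILE)]  # accumulator tile
            for k in range(0, n, TILE):
                acc = tile_mma(get_tile(A, r, k), get_tile(B, k, c), acc)
            for i in range(TILE):
                C[r + i][c:c + TILE] = acc[i]
    return C

n = 8
A = [[i + j for j in range(n)] for i in range(n)]
I = [[1 if i == j else 0 for j in range(n)] for i in range(n)]
print(tiled_matmul(A, I) == A)  # True: multiplying by the identity returns A
```

A large matrix multiply becomes a sequence of small tile operations that reuse the same accumulator, which is why the accumulate term matters as much as the multiply.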

Warp Schedulers manage thread execution. A GPU does not run one thread at a time like a CPU. It runs thousands concurrently, switching between warps to hide memory latency. When one warp is waiting for data from memory, the scheduler immediately switches to another warp that has its data ready. This latency-hiding trick is what allows GPUs to maintain high utilization even when memory access is slow.
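A toy simulation shows why more resident warps hide more latency. The model is a deliberate simplification and every number in it is an assumption: one scheduler, one instruction issued per cycle, and every instruction followed by a fixed memory wait of `memory_latency` cycles. The function name `utilization` is illustrative.

```python
def utilization(num_warps, memory_latency, cycles=10_000):
    """Fraction of cycles in which the scheduler found a ready warp.

    Toy model: after a warp issues an instruction, it waits
    `memory_latency` cycles before it is ready to issue again.
    """
    ready_at = [0] * num_warps  # cycle at which each warp can issue next
    busy_cycles = 0
    for cycle in range(cycles):
        for w in range(num_warps):
            if ready_at[w] <= cycle:
                ready_at[w] = cycle + 1 + memory_latency
                busy_cycles += 1
                break  # at most one instruction issued per cycle
    return busy_cycles / cycles

LATENCY = 99  # hypothetical memory wait, in cycles
for warps in (1, 50, 100):
    print(warps, utilization(warps, LATENCY))
# 1 0.01
# 50 0.5
# 100 1.0
```

In this model the scheduler stays fully busy once the number of ready-to-switch warps covers the wait, which is the reason an SM keeps dozens of warps resident at once.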

The Memory Hierarchy: SRAM, HBM, and the Memory Wall

The GPU's compute cores are fast, but they process data no faster than memory delivers it. This is where the memory hierarchy becomes critical, and where most of the engineering complexity lives.

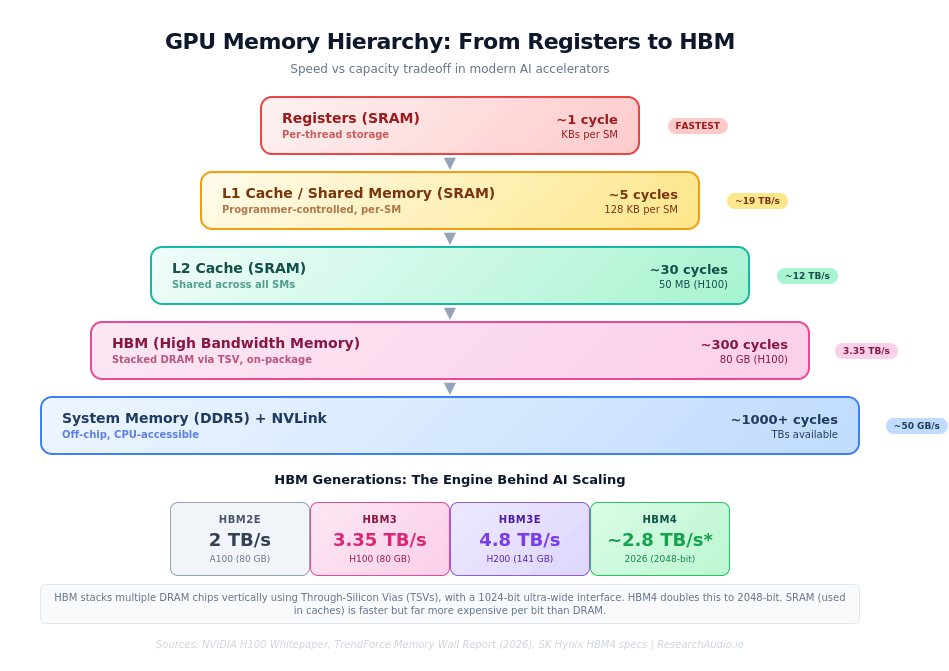

SRAM (Static RAM) is the fastest memory on the chip. It uses six transistors per bit to store data, which makes it expensive but extremely fast, requiring no refresh cycles. SRAM appears in three forms on a GPU: registers (the fastest, measured in KBs per SM), L1 cache and shared memory (256 KB combined per SM on the H100, with the shared-memory portion under programmer control), and the L2 cache (50 MB shared across all SMs). An L1 hit takes on the order of tens of clock cycles. An L2 hit takes on the order of a couple of hundred.

HBM (High Bandwidth Memory) is the GPU's main memory. Unlike conventional DRAM, which sits on separate modules away from the processor, HBM stacks multiple DRAM layers vertically using Through-Silicon Vias (TSVs), tiny copper pillars drilled through the silicon itself. These stacks connect to the GPU die through a silicon interposer, giving each stack a 1024-bit ultra-wide memory interface. The result: the H100's 80 GB of HBM3 delivers 3.35 TB/s of bandwidth, roughly 67 times the bandwidth of a single DDR5 memory channel (about 50 GB/s).

But even 3.35 TB/s is not enough. When a large language model runs on a GPU, its weights live in HBM. During inference, each generated token requires reading and processing those weights. During training, gradients, optimizer states, and activations all compete for memory bandwidth. This bottleneck, where compute grows faster than memory can feed it, is called the memory wall. TrendForce reports that GPU computational power has grown much faster than memory bandwidth and data transfer efficiency in recent years, making memory the primary constraint on AI performance.
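A back-of-the-envelope calculation makes the memory wall tangible. The bandwidth and FP8 figures come from the text; the 7-billion-parameter model, the batch size of one, and the function names are assumptions for illustration only.

```python
HBM_BANDWIDTH_BPS = 3.35e12   # bytes per second, H100 HBM3 figure from the text
PEAK_FP8_FLOPS = 3958e12      # FLOP per second, FP8 figure from the text
BYTES_PER_PARAM = {"FP32": 4, "FP16": 2, "FP8": 1}

def min_ms_per_token(num_params, precision):
    """Lower bound on per-token latency if every weight is read once from HBM."""
    weight_bytes = num_params * BYTES_PER_PARAM[precision]
    return weight_bytes / HBM_BANDWIDTH_BPS * 1e3

def break_even_intensity():
    """FLOPs that must be done per byte read before compute becomes the limit."""
    return PEAK_FP8_FLOPS / HBM_BANDWIDTH_BPS

PARAMS = 7e9  # hypothetical 7-billion-parameter model, batch size 1
for precision in BYTES_PER_PARAM:
    print(precision, round(min_ms_per_token(PARAMS, precision), 2), "ms per token")
print(round(break_even_intensity()), "FLOPs per byte to saturate the Tensor Cores")
```

Under these assumptions, halving the bytes per weight halves the floor on token latency, and a kernel needs on the order of a thousand operations per byte fetched before the cores, rather than HBM, become the bottleneck.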

Key Insight: The difference between SRAM and HBM is not just speed: it is architectural. SRAM uses 6 transistors per bit and never needs refreshing. DRAM (which HBM is built from) uses 1 transistor and 1 capacitor per bit, and every cell must be refreshed periodically, typically every few tens of milliseconds, because the capacitor leaks charge. This is why SRAM is used for caches (small, fast) and DRAM for main memory (large, slower). The entire art of GPU programming is minimizing how often data must travel from HBM to the compute cores.

HBM Generations: The AI Memory Arms Race

| Memory generation | GPU or year | Bandwidth |
| --- | --- | --- |
| HBM2e | A100 | 2 TB/s |
| HBM3 | H100 | 3.35 TB/s |
| HBM3e | H200 | 4.8 TB/s |
| HBM4 | 2026 | ~2.8 TB/s* |

*HBM4 targets 2.8 TB/s per stack at 11 Gbps with a 2048-bit interface width.
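The per-stack HBM4 figure can be checked from the interface width and pin rate quoted in the footnote. The function name below is illustrative; the formula is simply pins times bits per second per pin, divided by eight bits per byte.

```python
def stack_bandwidth_tbps(interface_bits, pin_rate_gbps):
    """Per-stack bandwidth in TB/s from interface width and per-pin data rate."""
    gigabytes_per_s = interface_bits * pin_rate_gbps / 8  # 8 bits per byte
    return gigabytes_per_s / 1000

print(round(stack_bandwidth_tbps(2048, 11), 2))  # 2.82 (HBM4 target from the footnote)
print(round(stack_bandwidth_tbps(1024, 11), 2))  # 1.41 (same pin rate, 1024-bit width)
```

At a fixed pin rate, doubling the interface width doubles the bandwidth of each stack, and a GPU's total bandwidth is the per-stack figure multiplied by the number of stacks on the package.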

Each generation increases both capacity and bandwidth. The H100 uses 80 GB of HBM3. The H200 jumped to 141 GB of HBM3e. NVIDIA's upcoming Blackwell Ultra (GB300) targets 288 GB of HBM3e, and the Rubin Ultra architecture aims for 512 GB using HBM4. According to Micron's fiscal Q1 2026 earnings, HBM capacity is sold out through calendar year 2026. TrendForce projected HBM demand to grow over 130% year-over-year in 2025.

HBM4, expected to enter mass production in 2026, doubles the memory interface width from 1024-bit to 2048-bit. SK Hynix and Samsung have both delivered paid final HBM4 samples to NVIDIA. The new standard also introduces a logic base die, which allows compute capabilities to be integrated directly into the memory stack itself.

Key Insight: The progression from H100 (80 GB) to Rubin Ultra (512 GB) means each accelerator consumes 6.4x more HBM. This explains why memory manufacturers are reporting record margins above 50% and why gaming GPU production faces 40% cuts: the same DRAM wafers are being redirected to HBM stacks for AI chips.

CUDA: The Software Moat

Hardware alone does not explain NVIDIA's dominance. CUDA (Compute Unified Device Architecture) is NVIDIA's parallel computing platform, released over 15 years ago. When you call model.cuda() in PyTorch, CUDA allocates memory on the GPU and copies the model's parameters from CPU to GPU over the PCIe bus. Each forward or backward pass then launches parallel kernels across thousands of threads and leaves the results in GPU memory.

CUDA's ecosystem includes over 450 SDKs and libraries such as cuBLAS (matrix operations), cuDNN (deep learning primitives), and TensorRT (inference optimization). On NVIDIA hardware, every major ML framework, from PyTorch to JAX, ultimately runs on CUDA kernels. For a competitor to displace NVIDIA, building a better chip is just half the challenge. The other half is replicating a software ecosystem that 3 million developers depend on. AMD's ROCm platform is the leading alternative but is currently less mature and has a smaller ecosystem.

Why This Matters for Practitioners

Understanding GPU architecture changes how you write and deploy AI systems. Techniques like FlashAttention achieve their speedups specifically by minimizing data movement between HBM and SRAM, computing attention in tiles that fit in shared memory rather than materializing the full attention matrix in HBM. Mixed-precision training (using FP16 or FP8 instead of FP32) cuts the memory needed for those tensors to a half or a quarter, allowing larger batch sizes that keep more compute units busy. Model parallelism strategies (tensor parallel, pipeline parallel, expert parallel) are fundamentally decisions about how to split a model and its data across the memory hierarchy of multiple GPUs connected via NVLink.
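The tiling idea can be sketched for a single attention row. This is not FlashAttention itself, only the running-softmax trick it builds on, written in plain Python with scalar values for brevity; the function names and the tile size are illustrative assumptions. The tiled version never holds more than one tile of weights at a time, yet matches the version that materializes them all.

```python
import math

def attention_full(scores, values):
    """Reference: materialize every softmax weight, then take the weighted sum."""
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    return sum(w * v for w, v in zip(weights, values)) / sum(weights)

def attention_tiled(scores, values, tile=2):
    """Same result, visiting the inputs one tile at a time with constant extra state."""
    running_max = float("-inf")
    running_sum = 0.0  # sum of exp(score - running_max) over tiles seen so far
    running_out = 0.0  # matching weighted sum of values
    for start in range(0, len(scores), tile):
        s_tile = scores[start:start + tile]
        v_tile = values[start:start + tile]
        new_max = max(running_max, max(s_tile))
        scale = math.exp(running_max - new_max)  # rescale earlier partial results
        running_sum *= scale
        running_out *= scale
        for s, v in zip(s_tile, v_tile):
            w = math.exp(s - new_max)
            running_sum += w
            running_out += w * v
        running_max = new_max
    return running_out / running_sum

scores = [0.5, 2.0, -1.0, 3.5, 0.0]   # hypothetical query-key dot products
values = [1.0, 2.0, 3.0, 4.0, 5.0]    # hypothetical scalar values
print(abs(attention_full(scores, values) - attention_tiled(scores, values)) < 1e-12)  # True
```

On a GPU, each tile would be loaded into shared memory once, processed, and discarded, so the full row of weights never has to be written to or read back from HBM.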

Key Insight: The single most impactful optimization in modern AI is not algorithmic. It is reducing how often data travels between HBM and the compute cores. FlashAttention, quantization, kernel fusion, and operator tiling all target the same bottleneck: the memory wall. If you understand the GPU memory hierarchy, you understand why these techniques work.

The AI industry spent over $210 billion on data center infrastructure in 2024, with a large portion flowing to NVIDIA GPUs. Meta planned to deploy 350,000 H100s. xAI built a cluster of 100,000. The semiconductor memory market grew 78% in 2024 to $170 billion. All of this spending comes down to a single architectural fact: neural networks are matrix multiplies, and GPUs are matrix multiply machines. The bottleneck is no longer compute. It is memory bandwidth, and every generation of HBM is a bet that feeding the cores faster is worth more than adding more cores.

ResearchAudio.io

Sources: NVIDIA Hopper Architecture Whitepaper, TrendForce Memory Wall Report, Inside NVIDIA GPUs (Aleksa Gordic), Introl AI Memory Supercycle