
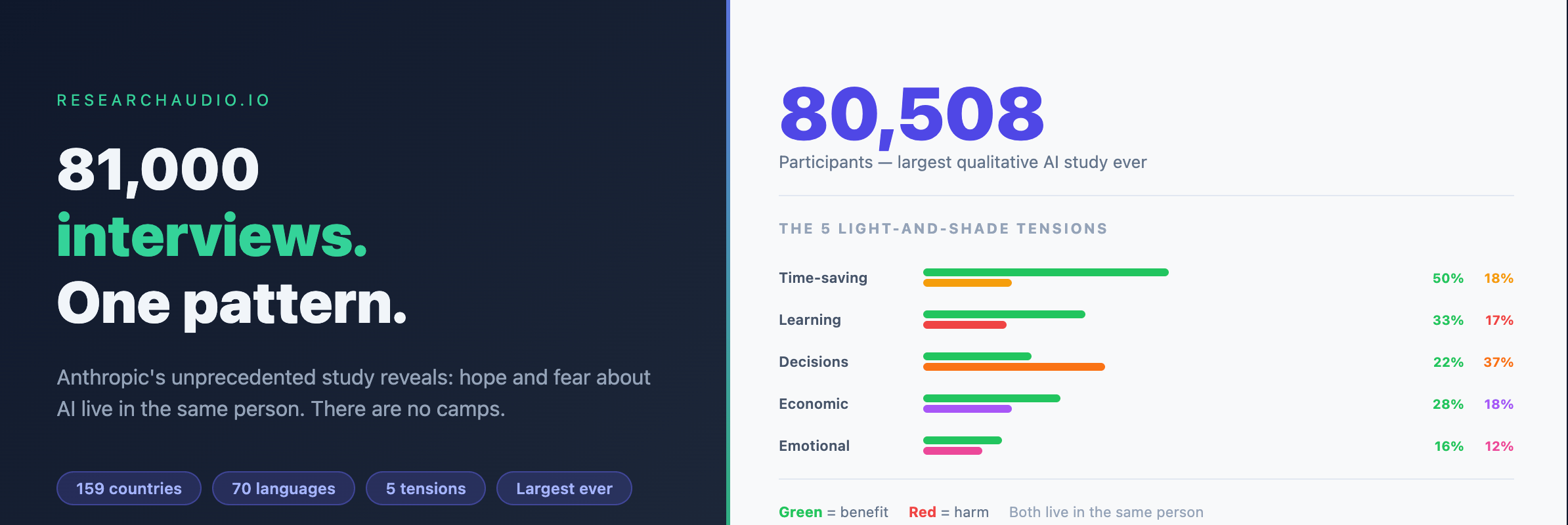

Last December, Anthropic invited every Claude.ai user to sit down with an AI interviewer. Not a survey. Not a multiple-choice form. A real conversation about hopes, fears, and the role AI plays in their lives. 80,508 people responded, across 159 countries and 70 languages. Anthropic believes it is the largest and most multilingual qualitative study ever conducted.

What they found was not what public discourse about AI would predict. There were no clean camps of optimists and pessimists. Instead, there was a more human and more complicated picture: people simultaneously holding hope and fear about the same thing.

| Participants | Countries | Languages | Positive sentiment |
|---|---|---|---|
| 80,508 | 159 | 70 | 67% |

How the Study Worked

Anthropic used a version of Claude (called Anthropic Interviewer) to conduct structured conversations with each participant. The interviewer asked a fixed set of questions: what people want from AI, whether they are getting it, and what they fear might go wrong. Follow-up questions adapted to each person's responses.

After collecting 80,508 interviews, the team built Claude-powered classifiers to categorize each conversation along multiple dimensions. Each answer to "what people want from AI" was assigned a single primary category. Concerns were coded with a multi-label approach, since respondents raised 2.3 distinct worries on average.
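The difference between the two coding schemes can be illustrated with a minimal Python sketch. It assumes a hypothetical schema (`CodedInterview`, with one `vision` label and a set of `concerns`) and made-up toy records; it does not reproduce Anthropic's Claude-powered classifiers, only what their output might look like once aggregated. The point it shows: single-label vision shares sum to 100%, while multi-label concern shares sum to 100 times the average number of concerns per respondent.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class CodedInterview:
    """One classified interview (hypothetical schema, for illustration)."""
    vision: str                                      # single-label: exactly one primary category
    concerns: set[str] = field(default_factory=set)  # multi-label: zero or more concerns


def vision_shares(interviews):
    """% of respondents per primary vision; single-label, so shares sum to 100."""
    counts = Counter(i.vision for i in interviews)
    return {label: 100 * n / len(interviews) for label, n in counts.items()}


def concern_shares(interviews):
    """% of respondents mentioning each concern; multi-label, so the total can exceed 100."""
    counts = Counter(c for i in interviews for c in i.concerns)
    return {label: 100 * n / len(interviews) for label, n in counts.items()}


def mean_concerns(interviews):
    """Average number of distinct concern labels per respondent."""
    return sum(len(i.concerns) for i in interviews) / len(interviews)


# Toy data for illustration only; not drawn from the study.
sample = [
    CodedInterview("Professional excellence", {"Unreliability", "Displacement"}),
    CodedInterview("Time freedom", {"Dependency", "Atrophy", "Unreliability"}),
    CodedInterview("Professional excellence", {"Unreliability"}),
    CodedInterview("Entrepreneurship", {"Displacement", "Illusory gain"}),
]

print(vision_shares(sample))                 # {'Professional excellence': 50.0, 'Time freedom': 25.0, 'Entrepreneurship': 25.0}
print(mean_concerns(sample))                 # 2.0
print(sum(concern_shares(sample).values()))  # 200.0
```

If concern shares are computed as a percentage of respondents, as in this sketch, the study's reported average of 2.3 concerns would mean its concern percentages add up to roughly 230%.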

CLAUDE.AI USERS (one-week invite) → AI INTERVIEWER (adaptive conversations) → AI CLASSIFIERS (multi-label codes) → 5 TENSIONS (analyzed)

Study methodology: AI-powered interviews at qualitative scale. Source: Anthropic (2026)

What 80,508 People Want From AI

The top aspiration, cited by 18.8% of respondents, was professional excellence: wanting AI to handle routine tasks so they can focus on higher-value work. But when the interviewer asked what realizing that vision would actually enable, a different picture emerged. Many people talking about productivity were really talking about getting time back for their families.

WHAT PEOPLE WANT FROM AI (primary category, % of respondents)

| Primary category | % of respondents |
|---|---|
| Professional excellence | 18.8% |
| Personal transformation | 13.7% |
| Life management | 13.5% |
| Time freedom | 11.1% |
| Financial independence | 9.7% |
| Entrepreneurship | 8.7% |
| Learning and growth | 8.4% |

Classified from open-ended answers. 1% did not articulate a vision. Source: Anthropic (2026)

Key Insight: When people said they wanted AI for productivity, many were expressing something deeper: wanting to cook dinner with their mothers, or to leave work on time to pick up their children. The underlying desire was for more life outside work, not better work itself.


Has AI Delivered?

When asked if AI had ever taken a step toward their stated vision, 81% said yes. The most commonly cited area was productivity (32%), primarily technical acceleration: developers cutting 173-day processes down to 3 days. Another 17% cited cognitive partnership (AI as thinking collaborator), and nearly 10% pointed to learning.

The 8.7% who cited technical accessibility described something different from speed gains. These were people who had never before had access to technical skills: a mute user in Ukraine who built a text-to-speech communication tool; a butcher in Chile who had only touched a PC two or three times before AI helped him launch a business. For them, AI was not a productivity multiplier. It was a barrier remover.

Emotional support appeared in only 6.1% of responses, but these were among the most striking accounts collected: soldiers in Ukraine using AI during shelling; a bereaved woman using Claude as the only patient listener available after her mother died; a student in India overcoming a lifelong phobia of mathematics.

The 5 Light-and-Shade Tensions

The central finding of the study is what Anthropic calls "light and shade": the same AI capabilities that produce benefits also produce harms. These are not separate groups of optimists and pessimists. They are tensions within each person. Someone who values emotional support from AI is three times more likely to also fear becoming dependent on it.

THE 5 LIGHT-AND-SHADE TENSIONS

| Light (benefit) | Shade (harm) |
|---|---|
| Learning | Atrophy |
| Decisions | Unreliability |
| Emotional support | Dependency |
| Time-saving | Illusory gain |
| Economic empowerment | Displacement |

Source: Anthropic (2026)

The only tension in which the negative side outweighed the positive was better decision-making vs. unreliability. Some 22% reported AI improving their decisions, but 37% raised concerns about its unreliability. Crucially, both sides were rooted in direct experience: 88% of those excited about decision-making had seen it work firsthand, and 79% of those worried about unreliability had been burned directly. Lawyers reported the highest rates of both.

The most entangled tension was emotional support vs. dependence. It had the strongest co-occurrence: people excited about emotional support from AI were three times more likely than average to also worry about becoming dependent on it. A graduate student described telling Claude things she could not tell her partner, adding: "It felt like I was having an emotional affair."
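One common way to express "three times more likely than average" is lift: the share of respondents raising concern B among those who mentioned benefit A, divided by the share raising B across all respondents, P(B | A) / P(B). The study does not spell out its exact calculation, so the Python sketch below is an assumption, with a hypothetical `lift` function and made-up toy data constructed to give a lift of 3.

```python
def lift(respondents, a, b):
    """Lift of label `b` given label `a`: P(b | a) / P(b).

    Each respondent is represented as a set of labels (hypothetical format).
    A value of 3.0 means people who mention `a` are three times as likely
    as the average respondent to also mention `b`.
    """
    with_a = [r for r in respondents if a in r]
    if not with_a:
        raise ValueError(f"no respondent mentions {a!r}")
    base_rate = sum(b in r for r in respondents) / len(respondents)
    if base_rate == 0:
        raise ValueError(f"no respondent mentions {b!r}")
    conditional = sum(b in r for r in with_a) / len(with_a)
    return conditional / base_rate


# Toy data for illustration only; not drawn from the study.
# 12 respondents: 2 mention both labels, 2 mention only dependency, 8 mention neither.
toy = (
    [{"Emotional support", "Dependency"} for _ in range(2)]
    + [{"Dependency"} for _ in range(2)]
    + [{"Time-saving"} for _ in range(8)]
)

print(f"{lift(toy, 'Emotional support', 'Dependency'):.1f}x")  # 3.0x
```

Because P(B | A) / P(B) equals P(A and B) / (P(A) P(B)), lift is symmetric: in this toy data, people who mention dependency are also three times as likely as average to mention emotional support.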


Key Insight: The most speculative concerns (economic displacement, cognitive atrophy) were also the most systemic. The most experience-grounded concerns (unreliability, emotional dependence) were the most personal. The pattern: the closer the impact, the more people spoke from direct observation.


How Perspectives Vary by Region

No country had AI sentiment below 60% positive. But the range was meaningful. Sub-Saharan Africa (24% negative sentiment), Latin America (26% negative), and South Asia (31% negative) sat at the optimistic end. Western Europe (36% negative), North America (35% negative), and Oceania (36% negative) were the most skeptical.

REGIONAL AI SENTIMENT: % NEGATIVE (lower = more positive about AI)

| Region | % negative |
|---|---|
| Sub-Saharan Africa | 24% |
| Latin America | 26% |
| South Asia | 31% |
| East Asia | 35% |
| North America | 35% |
| Western Europe | 36% |

Concern about jobs and economy was the strongest predictor of negative AI sentiment. Source: Anthropic (2026)

The regional divide in AI visions was equally sharp. Wealthier regions wanted AI to manage cognitive overload and life complexity. Developing regions wanted AI as an entrepreneurial springboard. Entrepreneurship as an AI vision resonated most in Africa, South and Central Asia, and Latin America, where users described AI as a capital bypass mechanism: a way to start businesses without the funding or infrastructure otherwise required.


Key Insight: Concern about jobs was the single strongest predictor of overall AI sentiment globally. Regions where AI displacement felt concrete (wealthier, more AI-exposed markets) were consistently more negative. Regions where economic disruption already existed were more focused on AI as opportunity.


The Deepest Finding

Across all five tensions, one pattern held: the more personal and immediate the impact, the more likely people were to be speaking from experience. The more systemic and long-term the impact, the more speculative the concern. People have already experienced AI's ability to save time and improve decisions. They have not yet fully experienced its long-term economic or cognitive effects.

The question this study leaves open is whether "beneficial AI" means filling the gaps in broken human systems or building new ones. The soldier in Ukraine who used AI during shelling, the bereaved woman with no human listener, and the mute Ukrainian who built his own communication tool: these are real gains. They are also signs of systems that were not there when they were needed. The usefulness is real. The question is what we are asking it to cover.
