Dictate code. Ship faster.

Wispr Flow understands code syntax, technical terms, and developer jargon. Say async/await, useEffect, or try/catch and get exactly what you said. No hallucinated syntax. No broken logic.

Flow works system-wide in Cursor, VS Code, Windsurf, and every IDE. Dictate code comments, write documentation, create PRs, and give coding agents detailed context- all by talking instead of typing.

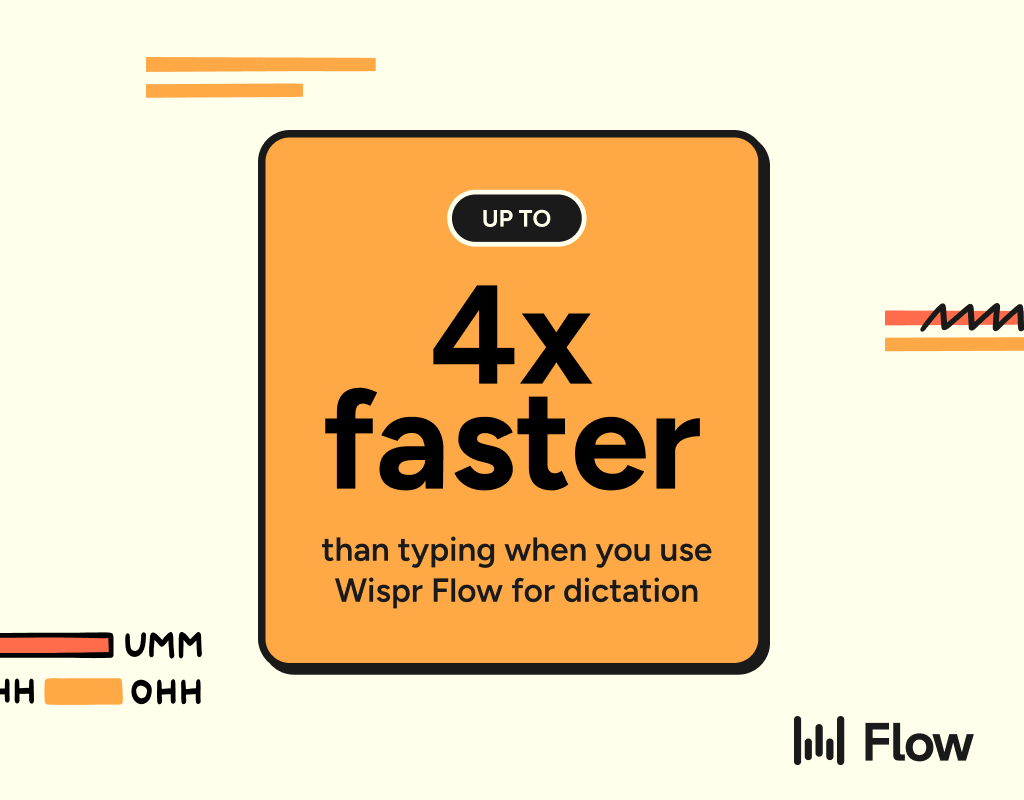

89% of messages sent with zero edits. 4x faster than typing. Millions of developers use Flow worldwide, including teams at OpenAI, Vercel, and Clay.

Available on Mac, Windows, iPhone, and now Android - free and unlimited on Android during launch.

|

ResearchAudio.io

Anthropic Refused the Pentagon. Then Published Why.

Claude runs classified ops. The Pentagon wants it without guardrails.

Anthropic, the company behind Claude, just published its most consequential statement to date. In a public letter from CEO Dario Amodei, Anthropic drew two hard lines with the Department of War (the renamed Department of Defense): no mass domestic surveillance, and no fully autonomous weapons. The Pentagon's response was an ultimatum with a Friday 5:01 PM deadline, threats to invoke the Defense Production Act, and a promise to label Anthropic a "supply chain risk," a designation normally reserved for foreign adversaries. Anthropic's answer: "These threats do not change our position."

Why This Matters

This is not a hypothetical policy debate. Anthropic holds a $200 million Pentagon contract signed last July alongside Google, OpenAI, and xAI. Claude is currently the only frontier AI model deployed in classified military settings. One defense official told Axios: "The only reason we're still talking to these people is we need them and we need them now. The problem for these guys is they are that good." The other three contract holders (OpenAI, Google, xAI) have agreed to the Pentagon's "any lawful use" terms. Anthropic is the only one drawing boundaries, and the only one currently operating inside classified networks.

🚫 No mass domestic surveillance

🚫 No fully autonomous weapons

Track Record: ✓ First on classified networks

⚔

Pentagon Threats:
⚠ Cancel $200M contract
⚠ "Supply chain risk" label
⚠ Defense Production Act

Source: Anthropic public statement (Feb 26, 2026), Axios, NPR, CNN, Fortune reporting

What Anthropic Will and Won't Do

Amodei's statement is carefully calibrated. It opens by affirming Anthropic's commitment to national defense in the strongest terms: "I believe deeply in the existential importance of using AI to defend the United States and other democracies, and to defeat our autocratic adversaries." This is not a company running from military work.

Anthropic supports intelligence analysis, modeling and simulation, operational planning, and cyber operations. It supports partially autonomous weapons like those deployed in Ukraine. It even acknowledges that fully autonomous weapons "may prove critical" in the future. The objection is narrower than it first appears: today's frontier AI systems are not reliable enough to power weapons that remove humans from the targeting loop entirely. Anthropic offered to collaborate on R&D to improve this reliability. The Department of War declined.

On surveillance, the distinction is equally precise. Anthropic supports lawful foreign intelligence and counterintelligence. What it rejects is mass domestic surveillance, where AI can assemble scattered, individually innocuous data (movement records, browsing history, associations) into comprehensive profiles of American citizens at scale. Amodei cites the Intelligence Community's own acknowledgement that current data purchasing practices raise privacy concerns.

The Contradiction at the Core

The Pentagon's two threats work against each other, and both sides know it. Designating Anthropic a "supply chain risk" would require every Pentagon vendor and contractor to certify they do not use Claude. Invoking the Defense Production Act would compel Anthropic to provide Claude to the military. As Dean Ball, a former Trump administration AI adviser, told Politico: you cannot simultaneously tell every DoD supplier to stop using Anthropic's models while also demanding that the DoD must use those same models.

Legal experts have also questioned whether the DPA even applies here. Technology lawyer Katie Sweeten (a former DOJ official) noted that the DPA is typically used to compel production of commercially available goods during emergencies, not to strip safety guardrails from custom-built government software. Anthropic could challenge any DPA invocation in court.

The Broader Landscape

Anthropic stands alone among its peers on this issue. OpenAI, Google, and Elon Musk's xAI have all agreed to the Pentagon's "any lawful use" terms. xAI was approved for classified settings just this week. White House AI czar David Sacks has publicly attacked Anthropic as "woke AI" and the "doomer industrial complex," accusing the company of using safety concerns as a regulatory capture strategy.

The financial stakes are not trivial, but they are also not existential for Anthropic. The company recently closed a $30 billion funding round at a $380 billion valuation and has over 500 enterprise customers spending more than $1 million annually. The $200 million Pentagon contract matters, but losing it would not sink the company. What it could do is eviscerate Anthropic's reputation in the government and defense contracting ecosystem, particularly if the "supply chain risk" designation sticks. Fortune reported that Anthropic is contemplating an IPO as early as next year.

Key Insight #1: Anthropic's red lines are narrower than they appear. The company supports intelligence analysis, cyber ops, operational planning, and even partially autonomous weapons. The two exceptions (mass domestic surveillance and fully autonomous targeting without human oversight) have, by Anthropic's account, never actually blocked a Pentagon use case to date.

Key Insight #2: The Pentagon's leverage is weaker than its rhetoric suggests. Claude is the only frontier model currently operating in classified environments. Cutting Anthropic off would require finding and validating a replacement for active military planning, intelligence, and cyber operations, all while maintaining operational continuity.

Key Insight #3: This fight sets the precedent for who controls AI deployment in government: the companies that build models, or the agencies that use them. Every other frontier lab has deferred to the Pentagon. If Anthropic holds its position and survives the fallout, it creates a template for principled refusal. If it folds, the question is settled for a generation.

The Friday 5:01 PM deadline has arrived, and Amodei's public statement is the answer. The most consequential question in AI governance right now is not whether models can be made safe enough for military use. It is whether the people who build these systems retain any say in how they are deployed once the contract is signed.

ResearchAudio.io · Sources: Anthropic Statement (Feb 26, 2026) · Axios · NPR · CNN · Fortune