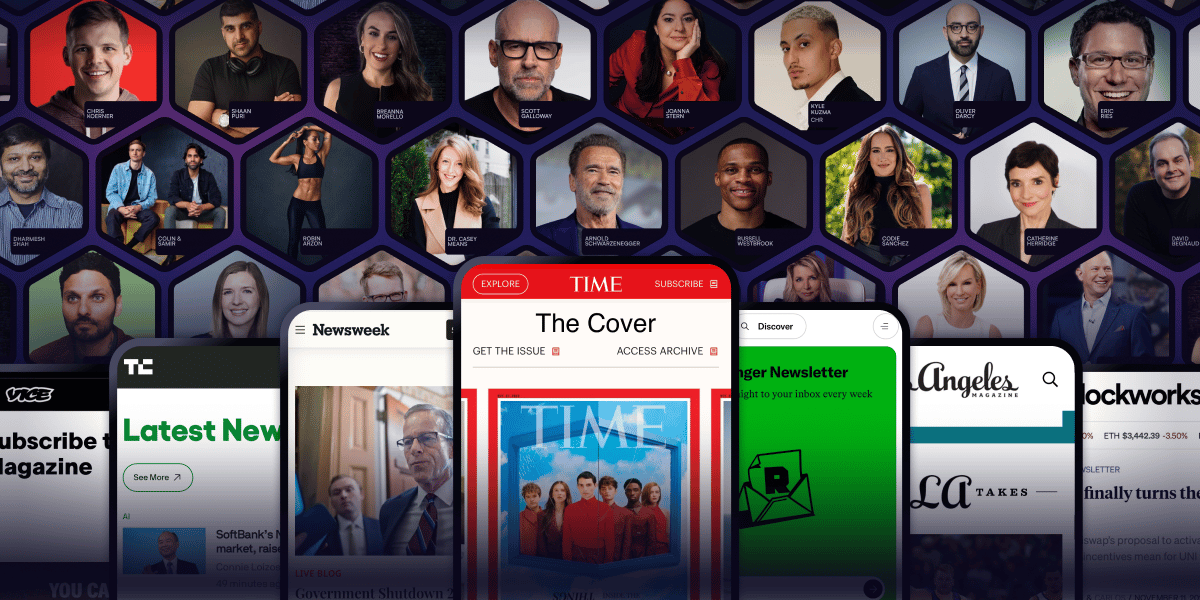

Arnold Schwarzenegger has a newsletter.

Yeah. That Arnold Schwarzenegger.

So do Codie Sanchez, Scott Galloway, Colin & Samir, Shaan Puri, and Jay Shetty. And none of them are doing it for fun. They're doing it because a list you own compounds in ways that social media never will.

beehiiv is where they built it. You can start yours for 30% off your first 3 months with code PLATFORM30. Start building today.

|

ResearchAudio.io

YC's CEO Shipped 600,000 Lines of AI Code in 60 Days

I reproduced his stack. Here's the honest breakdown.

600K raw lines, 60 days · 4.5h hidden debug tax · 50K-line coherence cliff

Last month, Garry Tan tweeted a screenshot. His GitHub contribution graph, sixty days, nearly every square dark green. The headline number: 600,000 lines added.

The context: Tan runs Y Combinator full-time. Board meetings, batch reviews, crisis comms. And somehow he generated more code than most startups ship in a year. The tool: Claude Code, running inside a workflow he calls gstack.

One caveat before we go further. Tan publishes a normalized number too, in the README under "On the LOC Controversy." It strips AI inflation and lands at 11,417 logical lines per day, an 810x lift over his 2013 baseline. The 600K is real. So is the asterisk. Both can be true.

The reactions split the internet. Skeptics: "Show me the diffs. 600K of what, generated noise?" Believers: "One person with AI is a 20-person engineering team."

I spent 48 hours running gstack against a real production service to find out who was right. Neither side was. The truth is more interesting, and more threatening.

My testbed was not a toy. A production-grade Node.js service: 15,000 lines, PostgreSQL, Redis, OAuth, Stripe billing, real unit tests, enough traffic to know immediately if something broke.

I gave myself a real feature: a multi-tenant audit log system with streaming exports, webhook delivery, and row-level security. The kind of thing a senior engineer takes 2 to 3 focused days to scaffold, test, and ship if they are good. I wanted to see how close gstack could get.

There is a popular misconception that Tan is "vibe coding," waving his hands at Claude and merging to main. That is not what is happening. gstack has four rigid phases, and the AI is only the engine in one of them. The frame is everything.

Phase 1 · Prompt Protocol

Define the API contract, data model, test matrix, and integration boundaries before any code is generated. The work nobody screenshots.

Phase 2 · Generate

Claude Code reads existing fixtures, then generates controllers, services, migrations, tests, and OpenAPI docs. It reruns until coverage clears the threshold.

Phase 3 · The Gate

Tan reviews every PR manually. The AI fills the frame. The human still holds it. This phase takes roughly as long as Phase 2.

Phase 4 · Modularize

Split work into micro-repos. The AI operates on one module at a time. Avoids the coherence cliff. Adds cross-module friction.
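
The Phase 2 rerun loop can be sketched in a few lines. This is a minimal illustration, not gstack's actual implementation: `generate` and `measure_coverage` are hypothetical callables standing in for a Claude Code run and a coverage report, and the 0.80 threshold and five-attempt cap are assumed values the source does not state.

```python
from typing import Callable


def generate_until_covered(
    generate: Callable[[int], None],
    measure_coverage: Callable[[], float],
    threshold: float = 0.80,  # assumed value; the article does not give one
    max_attempts: int = 5,    # assumed cap so the loop cannot run forever
) -> tuple[bool, int, float]:
    """Rerun generation until coverage clears the threshold or attempts run out."""
    coverage = 0.0
    for attempt in range(1, max_attempts + 1):
        generate(attempt)              # hypothetical: one generation pass
        coverage = measure_coverage()  # hypothetical: read the coverage report
        if coverage >= threshold:
            return True, attempt, coverage
    return False, max_attempts, coverage


# Stand-in callables with made-up coverage readings, for illustration only.
readings = iter([0.62, 0.74, 0.83])
passed, attempts, final = generate_until_covered(
    generate=lambda attempt: None,
    measure_coverage=lambda: next(readings),
)
print(passed, attempts, final)
```

Clearing the threshold only ends Phase 2. In the workflow described above, the output still goes through the manual gate in Phase 3.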

I tracked everything. Solo human baseline (me, no AI) on the left, gstack on the right. The audit-log feature, identical scope, both runs.

What the table actually says

Net win: 9 hours vs 14. Real, measurable. But not the 20x the headline implies. The hidden 4.5 hours of debugging AI output is the cost nobody mentions, and the three production bugs are the cost nobody wants to.
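
As a quick check on those numbers, here is the arithmetic spelled out. One assumption to flag: the sketch treats the 4.5 debugging hours as part of the 9-hour gstack total, which the figures above imply but do not state outright.

```python
solo_hours = 14.0    # solo human baseline, from the comparison above
gstack_hours = 9.0   # gstack run, from the comparison above
debug_hours = 4.5    # assumed to be included in the 9-hour total

speedup = solo_hours / gstack_hours       # how many times faster the gstack run was
hours_saved = solo_hours - gstack_hours   # absolute time saved
debug_share = debug_hours / gstack_hours  # fraction of the gstack run spent debugging

print(f"speedup: {speedup:.2f}x")
print(f"hours saved: {hours_saved:.1f}")
print(f"debugging share of gstack run: {debug_share:.0%}")
```

Under that assumption the lift is roughly 1.56x, and half of the gstack run went to debugging generated output.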

Boilerplate annihilation. Generating the skeleton of a feature (routes, validation, migrations, tests, docs) is genuinely 10 to 40 times faster than by hand. If you know what you are building, the AI turns a whiteboard sketch into a working module before your coffee cools.

Tests as default. Most engineers hate writing them. gstack makes them unavoidable, and because the AI generates them alongside the code, they are coherent with the implementation in a way hand-written tests often are not. I found 8 edge cases I would have missed.

Refactor propagation. Change a column name, regenerate dependents. What used to be 20 minutes of grep-and-replace is a 60-second prompt. Removing that friction is a real shift in what feels worth doing.

Solo-founder velocity. One person can scaffold an entire product surface (backend, frontend, auth, billing, admin) in a week. The bottleneck is no longer typing speed. It is product taste.

At around 50K lines, Claude Code loses the thread. It starts duplicating utility functions with slightly different names. It uses stale data models from earlier in the conversation. It deletes working code to "simplify" something it did not fully understand. It renames variables to things like userDataItemFinalV2 because it cannot track scope.
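
The duplicated-utility symptom is one of the easier ones to detect mechanically. The sketch below is an illustration only, not part of gstack: it uses Python's standard `ast` module on Python source (the testbed here was Node.js, where the same idea would need a JavaScript parser), and the function names in `SAMPLE` are made up. It only catches bodies that are structurally identical, not near-duplicates with small edits.

```python
import ast
from collections import defaultdict


def find_duplicate_functions(source: str) -> list[list[str]]:
    """Group functions whose arguments and bodies are structurally identical."""
    groups: dict[str, list[str]] = defaultdict(list)
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            # Fingerprint arguments and body only, so a rename does not hide a copy.
            fingerprint = ast.dump(node.args) + "".join(
                ast.dump(stmt) for stmt in node.body
            )
            groups[fingerprint].append(node.name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


# Hypothetical sample: two utilities that differ only by name, plus one unrelated.
SAMPLE = '''
def slugify(text):
    return text.strip().lower().replace(" ", "-")

def to_slug(text):
    return text.strip().lower().replace(" ", "-")

def shout(text):
    return text.upper()
'''

duplicates = find_duplicate_functions(SAMPLE)
print(duplicates)
```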

Tan's fix is aggressive modularization. That works, but modularizing is an architectural choice with real cost. It is not a free lunch.

AI-generated code has a higher deletion rate than human-written code because it over-solves. It writes abstractions for cases that will never occur. It adds flags nobody asked for.

After two days of gstacking, my codebase had three pagination strategies (cursor, offset, hybrid), two caching layers that invalidated each other, and an API naming convention that switched from kebab-case to camelCase halfway through. Everything worked. Nothing was consistent.
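
Naming drift like the kebab-case to camelCase switch can be surfaced with a small audit. This is a rough sketch under stated assumptions: the route list is hypothetical (not taken from my codebase), path parameters are assumed to use the `:name` style, and the two regular expressions are a simplified definition of each convention.

```python
import re
from collections import Counter

KEBAB = re.compile(r"^[a-z0-9]+(-[a-z0-9]+)+$")
CAMEL = re.compile(r"^[a-z]+[0-9]*([A-Z][a-z0-9]*)+$")


def classify_segment(segment: str) -> str:
    """Label one path segment by naming convention."""
    if KEBAB.match(segment):
        return "kebab-case"
    if CAMEL.match(segment):
        return "camelCase"
    return "neutral"  # a single lowercase word fits either convention


def naming_report(routes: list[str]) -> Counter:
    """Count naming conventions across all static path segments."""
    counts: Counter = Counter()
    for route in routes:
        for segment in route.strip("/").split("/"):
            if segment.startswith(":"):  # skip path parameters
                continue
            counts[classify_segment(segment)] += 1
    return counts


# Hypothetical routes for illustration.
ROUTES = [
    "/audit-logs",
    "/audit-logs/:id/export-jobs",
    "/webhookDeliveries",
    "/tenants/:tenantId/auditEvents",
]
report = naming_report(ROUTES)
mixed = report["kebab-case"] > 0 and report["camelCase"] > 0
print(dict(report), "mixed conventions:", mixed)
```

A check like this could run in CI and fail the build when `mixed` is true, which turns a consistency problem into something the gate catches before merge.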

The real engineering skill in a gstack world is not generation. It is curation. Deleting faster than the machine creates.

A typical feature run through gstack consumes 200,000 to 400,000 tokens, costing $0.20 to $2.00 depending on model and complexity (Dench breakdown, March 2026). My audit-log run averaged 350K tokens on Sonnet 4.
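
To put those ranges on a common scale, the sketch below derives an implied blended price per million tokens. It assumes the low cost pairs with the low token count and the high cost with the high token count, which the quoted breakdown does not state. It uses only the figures above, not any published price list.

```python
def implied_rate_per_million(cost_usd: float, tokens: int) -> float:
    """Blended dollars per million tokens implied by a cost and a token count."""
    return cost_usd / tokens * 1_000_000


# Assumed pairing: low cost with low token count, high cost with high token count.
low_rate = implied_rate_per_million(0.20, 200_000)
high_rate = implied_rate_per_million(2.00, 400_000)

# Apply the implied range to a 350K-token run.
run_tokens = 350_000
low_cost = run_tokens * low_rate / 1_000_000
high_cost = run_tokens * high_rate / 1_000_000

print(f"implied rate: ${low_rate:.2f} to ${high_rate:.2f} per million tokens")
print(f"350K-token run: ${low_cost:.2f} to ${high_cost:.2f}")
```

The point of the exercise is the spread: under this pairing the implied rate varies fivefold, so token counts alone say little about spend without knowing the model mix.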

The /ship skill specifically draws fire on the repo. Issue #949: "The token consumption of creating a PR is twice the token cost of the feature implementation itself." Filed by a user, still open.

The "10 to 15 parallel sprints" claim assumes a budget that my Anthropic spend cap does not allow.

The future engineer is not a typist. The future engineer is an editor with a strong bias for deletion.

Junior engineers: the risk is atrophy, not replacement

If you let AI write the frame, not just fill it, you will never develop the architectural intuition that makes seniors valuable. You will ship faster than your cohort and plateau earlier. Use gstack for 80 percent. For the remaining 20, write every line by hand from scratch with no AI. The friction is the lesson.

Senior engineers: bad taste is now 10x faster

Good taste is still human. The senior role shifts from writing the code to guarding the frame. You are no longer the fastest typist on the team. You are the last line of defense against architectural incoherence.

Hiring managers: stop testing typing speed

Stop testing LeetCode. Start testing: can this person define the frame, can they catch security holes that pass tests, can they delete faster than the AI generates. The best engineer in 2027 is the one who writes the 500 lines that make the other 36,500 unnecessary.

The metric nobody is publishing

Everybody is publishing lines shipped, commits per day, velocity dashboards. Nobody is publishing lines deleted, bugs at 6 months, mean time to repair. The bottleneck is not output. It is coherence over time. Lines are vanity. Coherence is sanity.
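
Lines deleted is the easiest of those missing metrics to start tracking. A minimal sketch, assuming you feed it the output of `git log --numstat --pretty=format:`, where each file line is `added<TAB>deleted<TAB>path` and binary files report `-` for both counts. The sample data and file paths below are made up.

```python
def churn_from_numstat(numstat: str) -> tuple[int, int, float]:
    """Sum added and deleted lines from `git log --numstat` output."""
    added = deleted = 0
    for line in numstat.splitlines():
        parts = line.split("\t")
        if len(parts) != 3:
            continue  # blank separator lines between commits
        a, d, _path = parts
        if not (a.isdigit() and d.isdigit()):
            continue  # binary files show "-" instead of counts
        added += int(a)
        deleted += int(d)
    ratio = deleted / added if added else 0.0
    return added, deleted, ratio


# Hypothetical numstat output for illustration.
SAMPLE = (
    "120\t4\tsrc/audit/export.ts\n"
    "300\t0\tsrc/audit/webhooks.ts\n"
    "\n"
    "-\t-\tdocs/diagram.png\n"
    "15\t210\tsrc/audit/pagination.ts\n"
)
added, deleted, ratio = churn_from_numstat(SAMPLE)
print(f"added={added} deleted={deleted} deleted per added line={ratio:.2f}")
```

Tracked per week, the deleted-to-added ratio gives a rough signal of whether curation is keeping pace with generation. It says nothing about bugs at 6 months or time to repair, which need incident data.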

We are in the awkward middle phase of AI engineering. The tools are good enough to be dangerous. They are not good enough to be trusted. The engineers who win the next two years will be the ones who understand this gap intimately.

AI is not replacing engineers. It is replacing the part of engineering that was always mechanical: the typing, the scaffolding, the test-writing. It is expanding the surface area of what one person can build and, at the same time, creating a new failure mode: systems that are locally correct and globally doomed.

The 48-hour move: install gstack, run /office-hours, /plan-eng-review, and /qa on your current side project. Skip the rest. Track your token spend per feature against your previous baseline. Decide on data, not vibes.

■ Reader challenge

If your team is shipping AI-generated code at production scale, I want three numbers. Your code-to-bug ratio with AI vs. purely human. The codebase size where you hit the coherence cliff. Your token spend per feature. Reply to this email. I will compile submissions into a follow-up: what production teams actually see when they deploy gstack at scale.

■ Watch the workflow first

A 30-second visualization of the full sprint

Built with Remotion. Five scenes covering Think, Plan, Build, Review, Test, Ship. Watch this before the breakdown above and the verdict lands sharper.

You can ship 600,000 lines and still have a brittle system. You can ship 15,000 lines and own the market.

I built a 12-line CLAUDE.md that beat gstack on cost-per-feature for solo developers across the same workload. The full file, the benchmark methodology, and the one place it loses badly.

ResearchAudio.io · one decoded paper or repo per send, for engineers shipping with frontier models.

Sources: github.com/garrytan/gstack · gstack README "On the LOC Controversy" · github.com/garrytan/gstack/issues/949 · dench.com gstack pricing breakdown · 48-hour audit-log reproduction
