

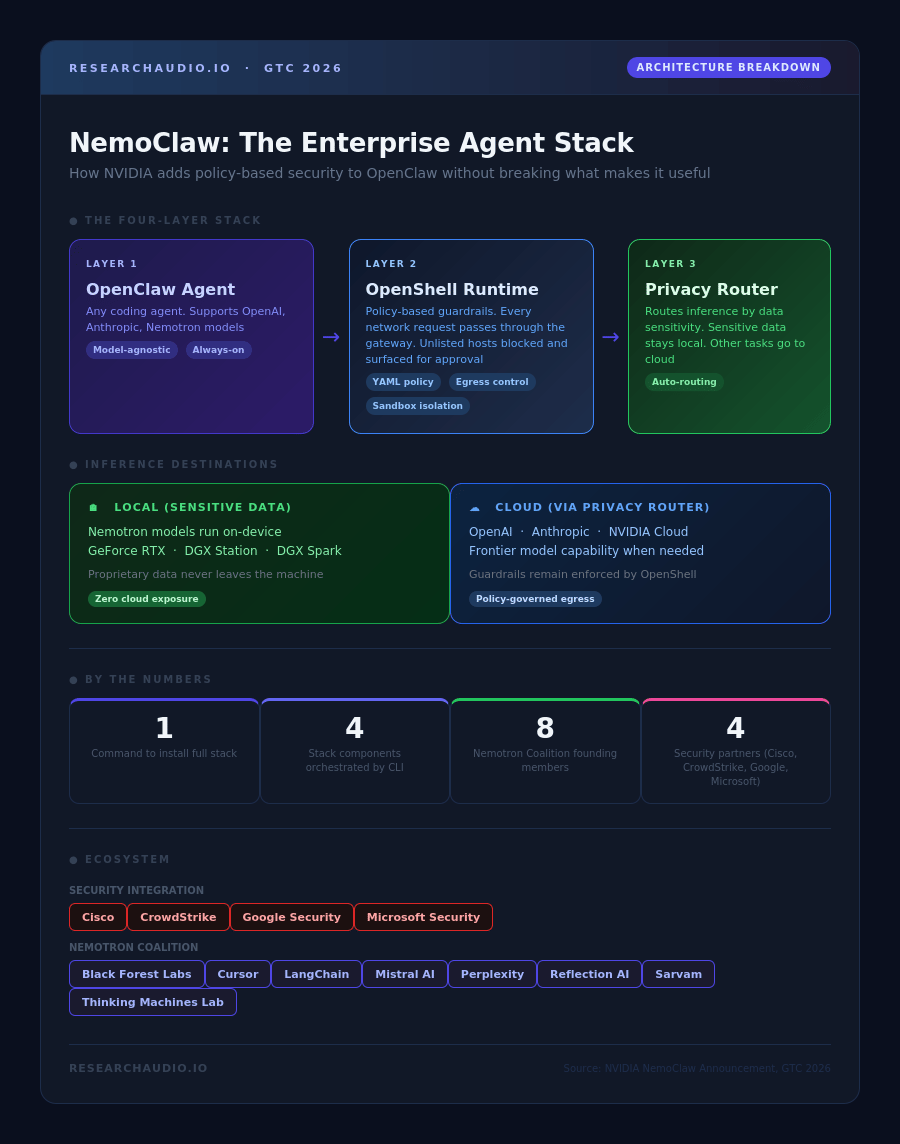

| 1 | 8 | 4 |
|---|---|---|
| Command to install | Nemotron Coalition founding members | Security partners (Cisco, CrowdStrike, Google, Microsoft) |

The Coalition and the Security Stack

Alongside NemoClaw, NVIDIA announced the Nemotron Coalition with eight founding members: Black Forest Labs, Cursor, LangChain, Mistral AI, Perplexity, Reflection AI, Sarvam, and Thinking Machines Lab. The coalition's stated goal is co-developing open frontier models optimized for agentic workloads.

NVIDIA is also working with Cisco, CrowdStrike, Google, and Microsoft Security to bring OpenShell compatibility to their respective security tools. The integration would embed OpenShell's guardrails into the broader enterprise security stack, rather than requiring a parallel system. That partnership structure matters: enterprises already have security tooling, and requiring teams to operate NemoClaw as a separate silo would slow adoption.

Key Insight: OpenShell addresses the agent security problem at the infrastructure layer, not the application layer. This is the same architectural logic that made Kubernetes a default: applications do not need to implement their own scheduling; the platform handles it. NemoClaw applies that principle to agent policy enforcement.
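To make the infrastructure-layer idea concrete, here is a minimal Python sketch of a platform-side gate that checks every tool call against a declared policy. It is not OpenShell's implementation or policy format: the `POLICY` schema, `PolicyGate`, `PolicyViolation`, and the stub tools are all hypothetical names invented for illustration. The point is only that the tools and the agent contain no permission logic of their own.

```python
from typing import Any, Callable, Dict

# Hypothetical policy. In practice this might be loaded from a YAML file;
# the schema here is invented and is NOT OpenShell's actual format.
POLICY: Dict[str, Any] = {
    "allowed_tools": ["read_file"],
}


class PolicyViolation(Exception):
    """Raised when a requested tool is not permitted by the policy."""


class PolicyGate:
    """Platform-side gate: every tool call must pass through call()."""

    def __init__(self, policy: Dict[str, Any], tools: Dict[str, Callable[..., Any]]):
        self._allowed = set(policy["allowed_tools"])
        self._tools = dict(tools)

    def call(self, tool_name: str, **kwargs: Any) -> Any:
        # The check lives here, not inside the agent or the tool.
        if tool_name not in self._allowed:
            raise PolicyViolation(f"tool '{tool_name}' is not permitted by policy")
        return self._tools[tool_name](**kwargs)


# Stub tools with no security logic of their own.
def read_file(path: str) -> str:
    return f"<contents of {path}>"


def delete_file(path: str) -> str:
    return f"deleted {path}"


gate = PolicyGate(POLICY, {"read_file": read_file, "delete_file": delete_file})

if __name__ == "__main__":
    print(gate.call("read_file", path="notes.txt"))
    try:
        gate.call("delete_file", path="notes.txt")
    except PolicyViolation as err:
        print(f"blocked: {err}")
```

In this sketch, changing what an agent may do means editing the policy, not the agent code, which is the property the Kubernetes comparison points at.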

Key Insight: The local-plus-cloud inference model means enterprises can classify workloads by data sensitivity. Proprietary contracts and HR data stay on local Nemotron. General tasks go to cloud frontier models. The privacy router handles the routing decision, not the agent, and not the developer.
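The routing idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not NemoClaw's privacy router: the `Sensitivity` labels, the `Task` type, the `route` function, and the backend names `local-nemotron` and `cloud-frontier` are hypothetical. The sketch also assumes a fail-closed default, so unlabeled work stays local; the source does not say how unlabeled data is handled.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Sensitivity(Enum):
    """Hypothetical data-sensitivity labels assigned by the enterprise."""
    RESTRICTED = "restricted"  # e.g. proprietary contracts, HR data
    GENERAL = "general"        # everything cleared for cloud inference


@dataclass(frozen=True)
class Task:
    description: str
    sensitivity: Optional[Sensitivity] = None  # None means "not yet classified"


# Hypothetical backend names, not real NemoClaw identifiers.
LOCAL_BACKEND = "local-nemotron"
CLOUD_BACKEND = "cloud-frontier"


def route(task: Task) -> str:
    """Choose a backend from the task's label alone.

    Only tasks explicitly labeled GENERAL leave the local environment;
    restricted and unlabeled tasks both stay local (assumed fail-closed).
    """
    if task.sensitivity is Sensitivity.GENERAL:
        return CLOUD_BACKEND
    return LOCAL_BACKEND


if __name__ == "__main__":
    for task in (
        Task("Summarize supplier contract", Sensitivity.RESTRICTED),
        Task("Draft a blog outline", Sensitivity.GENERAL),
        Task("Unlabeled request"),
    ):
        print(f"{task.description} -> {route(task)}")
```

Because `route` reads only the label, neither the agent nor the developer writing the agent gets a say in where the data goes.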

Key Insight: NemoClaw is currently an early-stage alpha. NVIDIA stated developers should "expect rough edges" and noted the current focus is environment setup, not production-ready deployment. Teams evaluating it for production workloads should treat it as a signal of architectural direction rather than a deployable solution today.

The open question is whether enterprises will hand their agent infrastructure to NVIDIA as readily as they handed it their GPU training jobs. OpenShell's YAML policies are a reasonable answer to the security problem. Whether that answer is also a durable competitive position depends on how many of the eight coalition members and four security partners build deep integrations before an alternative emerges.

ResearchAudio.io Sources: NVIDIA NemoClaw · NVIDIA Newsroom · GitHub · TechCrunch