
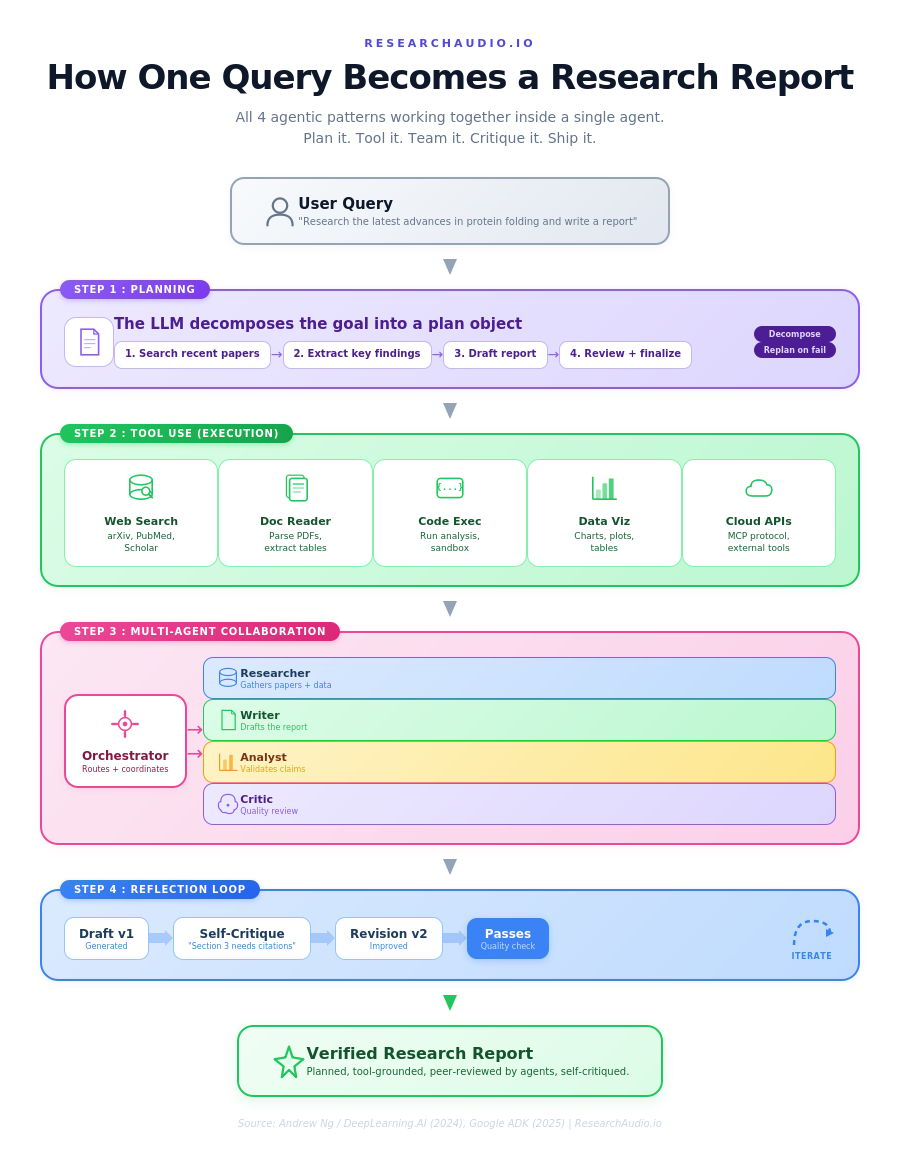

ResearchAudio.io

48.1% to 95.1%. The 4 Agentic Patterns Behind the Jump.

Andrew Ng's framework explained simply. How reflection, tools, planning, and multi-agent actually work.

Here is the number that changed how the entire AI industry thinks about agents.

GPT-3.5, prompted once in zero-shot mode, scored 48.1% on the HumanEval coding benchmark. GPT-4, same setup, scored 67.0%. The obvious conclusion: bigger model, better results. Then Andrew Ng's team analyzed published results and found that the smaller model, wrapped in an agentic workflow (plan, write, test, reflect, rewrite), reached up to 95.1%. The same GPT-3.5. No fine-tuning. No new weights. Just a different architecture.

That result launched a framework that is now the standard vocabulary for building AI agents. Gartner projects that 40% of enterprise applications will embed AI agents by the end of 2026, up from less than 5% in 2025. The agentic market is projected to surge from $7.8 billion today to over $52 billion by 2030. And it all rests on four composable patterns that every engineer should understand cold.

This newsletter breaks each one down. No jargon. Concrete examples. Two diagrams that map the whole system. The goal: after reading this, you can explain agentic patterns to your team, your manager, or your 12-year-old nephew.

95.1%: GPT-3.5 with agentic workflow

40%: enterprise apps with agents by 2026

1,445%: surge in multi-agent inquiries

Why Single-Pass Prompting Hits a Wall

Asking an LLM to generate a final answer in one shot is like asking a developer to write production code without ever running it. No tests. No debugging. No code review. The output might be decent, but it cannot self-correct, cannot verify facts against external sources, and cannot decompose a hard problem into manageable pieces.

Agentic workflows fix this by prompting the model multiple times in a loop. The model plans, executes, evaluates, and revises. Each iteration feeds information from the previous step back into the next. This is what separates an "agent" from a chatbot: the ability to correct its own behavior based on intermediate results.
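The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any framework's API: `agent_loop`, `toy_act`, and `toy_evaluate` are hypothetical names, and the two toy functions stand in for model calls so the example runs offline.

```python
from typing import Callable, Optional, Tuple


def agent_loop(
    task: str,
    act: Callable[[str, Optional[str]], str],
    evaluate: Callable[[str], Tuple[bool, str]],
    max_iters: int = 5,
) -> Tuple[str, int]:
    """Run act -> evaluate -> revise until the evaluator accepts or the budget runs out."""
    feedback: Optional[str] = None
    output = ""
    for iteration in range(1, max_iters + 1):
        # Feedback from the previous iteration shapes the next attempt.
        output = act(task, feedback)
        accepted, feedback = evaluate(output)
        if accepted:
            return output, iteration
    return output, max_iters


# Toy stand-ins (assumptions): a real system would call an LLM in both places.
def toy_act(task: str, feedback: Optional[str]) -> str:
    # The first attempt forgets the unit; the revision uses the feedback to add it.
    return "42" if feedback is None else "42 ms"


def toy_evaluate(output: str) -> Tuple[bool, str]:
    if output.endswith("ms"):
        return True, ""
    return False, "Add the unit (ms)."


result, iterations = agent_loop("Report the latency", toy_act, toy_evaluate)
print(result, iterations)
```

The `max_iters` budget matters in practice: without it, an evaluator that never accepts would loop forever.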

Antonio Gulli, a senior engineer at Google, put it bluntly: most AI production failures between 2024 and 2026 were not model quality failures. They were architecture failures. The model was the brain, but there was no workflow around it to catch errors, access external data, or decompose complex tasks. That is what the four patterns solve.

Pattern 1: Reflection (The Self-Critique Loop)

What it does: The model generates an output, then a second prompt asks it to critique that output, then a third prompt asks it to revise based on the critique. This loop can repeat multiple times. Think of it as writing a first draft, switching hats to become the editor who marks it up with red pen, then switching back to the writer who incorporates the edits.

How it works: You implement this with a single model prompted twice (once as "writer," once as "critic"), or with two separate agents. The critic receives instructions like: "Your role is to critique code. Check it for correctness, style, and efficiency. Give constructive criticism." If you can also run the code in a sandbox and feed error messages back, the loop becomes significantly stronger. GitHub Copilot already does this internally: generate, review, evaluate, refine.

Why it matters: This is the simplest pattern to implement, and Ng says it often provides a "surprisingly nice bump." Without reflection, hallucinations go unchecked and silent errors propagate. With reflection, the system converges toward consistency. As one practitioner put it: "An agentic Descartes would say, I reflect, therefore I am."

Build it yourself: Two LLM calls and a feedback prompt. That is all you need. Call 1: "Write code for X." Call 2: "Critique this code for correctness, style, efficiency." Then feed the critique back to Call 1. Repeat 2-3 times. You now have a reflection agent.
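A rough sketch of that writer/critic loop follows. Everything here is an assumption made for illustration: `reflect`, `toy_writer`, and `toy_critic` are hypothetical names, and the writer and critic are canned functions rather than real model calls. The critic also runs the draft on a test input, which mimics the "feed error messages back" idea from the section above.

```python
from typing import Callable, List


def reflect(
    task: str,
    writer: Callable[[str, str], str],
    critic: Callable[[str], str],
    rounds: int = 3,
) -> List[str]:
    """Return every draft produced; the last one is the final answer."""
    drafts: List[str] = []
    critique = ""
    for _ in range(rounds):
        draft = writer(task, critique)
        drafts.append(draft)
        critique = critic(draft)
        if critique == "":  # the critic found nothing left to fix
            break
    return drafts


# Toy writer (assumption): returns a buggy draft first, a fixed one after critique.
def toy_writer(task: str, critique: str) -> str:
    if "ZeroDivisionError" in critique:
        return "def mean(xs):\n    return sum(xs) / len(xs) if xs else 0.0\n"
    return "def mean(xs):\n    return sum(xs) / len(xs)\n"


# Toy critic (assumption): executes the draft and reports what breaks.
def toy_critic(draft: str) -> str:
    namespace: dict = {}
    # Toy only: never exec untrusted model output outside a real sandbox.
    exec(draft, namespace)
    try:
        namespace["mean"]([])
    except ZeroDivisionError:
        return "mean([]) raised ZeroDivisionError; handle empty input."
    return ""


drafts = reflect("Write mean(xs)", toy_writer, toy_critic)
print(len(drafts))
print(drafts[-1])
```

Here the loop stops after two drafts because the second one survives the critic's test input.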

Pattern 2: Tool Use (Giving the Model Hands)

What it does: The LLM can call external tools: web search, code execution, database queries, APIs, calendars, email. This breaks the model out of its training data and lets it interact with the real world. It is the difference between a brain in a jar and a person who can pick up a phone.

How it works: The LLM receives a query, determines if external data is needed, activates the appropriate tool (search, calculator, API), receives the result, and combines it with its reasoning to produce a response. Protocols like the Model Context Protocol (MCP) now standardize how tools connect to LLMs, the same way USB standardized how peripherals connect to computers. Ask "Who won Euro 2024?" and the model knows it needs a search tool. It fires the query, gets "Spain won 2-1 against England," and incorporates that into its answer.
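The receive, decide, call, combine flow can be sketched as a small dispatcher. This is an illustration only and does not use MCP or any vendor's function-calling API: `toy_model`, `TOOLS`, and both tools are hypothetical, and the search tool returns a canned answer instead of hitting the network.

```python
import json
import operator
from typing import Callable, Dict


# Toy tools (assumptions): a real agent would call a search API or a sandboxed interpreter.
def search(query: str) -> str:
    canned = {"who won euro 2024?": "Spain won 2-1 against England"}
    return canned.get(query.strip().lower(), "no results")


def calculator(expression: str) -> str:
    ops = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}
    left, op, right = expression.split()
    return str(ops[op](float(left), float(right)))


TOOLS: Dict[str, Callable[[str], str]] = {"search": search, "calculator": calculator}


def toy_model(query: str) -> str:
    """Stand-in for the LLM: emits either a JSON tool request or a direct answer."""
    lowered = query.lower()
    if lowered.startswith("who won"):
        return json.dumps({"tool": "search", "input": query})
    if lowered.startswith("compute "):
        return json.dumps({"tool": "calculator", "input": query[len("compute "):]})
    return json.dumps({"answer": "No tool needed."})


def answer(query: str) -> str:
    decision = json.loads(toy_model(query))
    if "tool" not in decision:
        return decision["answer"]
    tool = TOOLS[decision["tool"]]          # look up the requested tool by name
    observation = tool(decision["input"])   # run it and capture the result
    return f"{decision['tool']} says: {observation}"


print(answer("Who won Euro 2024?"))
print(answer("compute 6 * 7"))
```

In a real system the final step would pass the observation back to the model for a natural-language answer; the f-string above only marks where that happens.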

Why it matters: Without tool use, the model is limited to what it memorized during training. It cannot look up a stock price, run a SQL query, or send an email. With tool use, the agent moves from generating text to performing actions. This is the pattern that turns a chatbot into an assistant that actually does things.

Pattern 3: Planning (Thinking Before Doing)

What it does: The LLM breaks a complex goal into a sequence of sub-tasks, executes them step by step, and can replan if a step fails. Think of the difference between giving a contractor a vague instruction ("renovate my kitchen") versus giving them blueprints with milestones, dependencies, and checkpoints.

How it works: The model first generates an explicit plan object: a list of steps with descriptions and expected outputs. Then it executes each step, often using tool calls along the way. If a step fails or produces unexpected results, the model revises the plan and routes around the failure. This is the pattern behind research agents that gather information, analyze findings, and produce comprehensive reports autonomously.
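One way to picture the "explicit plan object" is a small data structure plus an executor that replans on failure. The sketch below is an assumption-laden illustration: `Step`, `Plan`, `execute`, and `toy_replan` are hypothetical names, and the actions are plain functions where a real agent would make tool or model calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Step:
    description: str
    action: str          # key into the action table
    expected: str        # what a successful result should look like
    status: str = "pending"
    result: str = ""


@dataclass
class Plan:
    goal: str
    steps: List[Step] = field(default_factory=list)


def execute(
    plan: Plan,
    actions: Dict[str, Callable[[], str]],
    replan: Callable[[Plan, int, Exception], List[Step]],
    max_replans: int = 2,
) -> List[Tuple[str, str]]:
    """Run steps in order; on failure, replace the failed step and the rest of the plan."""
    history: List[Tuple[str, str]] = []
    index, replans = 0, 0
    while index < len(plan.steps):
        step = plan.steps[index]
        try:
            step.result = actions[step.action]()
            step.status = "done"
            history.append((step.action, "done"))
            index += 1
        except Exception as err:
            history.append((step.action, "failed"))
            if replans == max_replans:
                raise RuntimeError(f"Gave up on: {step.description}") from err
            replans += 1
            plan.steps[index:] = replan(plan, index, err)
    return history


# Toy actions and replanner (assumptions for the demo).
def failing_search() -> str:
    raise LookupError("search returned nothing useful")


actions = {
    "search": failing_search,
    "db_lookup": lambda: "3 matching records",
    "summarize": lambda: "summary written",
}


def toy_replan(plan: Plan, failed_index: int, error: Exception) -> List[Step]:
    replacement = Step("Look up records in the local database", "db_lookup", "a list of records")
    return [replacement] + plan.steps[failed_index + 1:]


plan = Plan(
    goal="Produce a short report",
    steps=[
        Step("Search the web for sources", "search", "a list of sources"),
        Step("Summarize the findings", "summarize", "a one-paragraph summary"),
    ],
)
history = execute(plan, actions, toy_replan)
print(history)
```

Keeping the plan as data rather than free text means it can be logged, inspected, and edited mid-run.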

Why it matters: Planning reduces what one practitioner calls "cognitive entropy," the tendency for an agent to wander aimlessly through a complex task. Without a plan, the model might start coding before understanding requirements, or search for data before knowing what questions to answer. The rule of thumb: no long-running agent should operate without an explicit plan object.

The resilience test: A good planning agent handles failure gracefully. If the web search returns nothing useful, the agent replans: try a different query, switch to a database lookup, or ask the user for clarification. This ability to route around failures is what separates a production agent from a demo that works on three examples.
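The fallback order in the resilience test (different query, database lookup, ask the user) can be written as an ordered chain of strategies. This is a hedged sketch, not a prescribed design: `resolve` and the three toy strategies are hypothetical, and their return values are canned.

```python
from typing import Callable, List, Optional, Tuple

Strategy = Tuple[str, Callable[[str], Optional[str]]]


def resolve(question: str, strategies: List[Strategy]) -> Tuple[str, str]:
    """Try each strategy in order; return (strategy_name, result) for the first useful result."""
    for name, strategy in strategies:
        result = strategy(question)
        if result:  # None or an empty string counts as "nothing useful"
            return name, result
    # Every automated route failed, so hand the problem back to the user.
    return "ask_user", f"Could not answer '{question}'. Can you clarify?"


# Toy strategies (assumptions): the two searches fail, the database lookup succeeds.
strategies: List[Strategy] = [
    ("web_search", lambda q: None),
    ("rephrased_search", lambda q: ""),
    ("database_lookup", lambda q: "found in local database"),
]

used, result = resolve("What changed in the last release?", strategies)
print(used, "->", result)

fallback, message = resolve("What changed?", [("web_search", lambda q: None)])
print(fallback, "->", message)
```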

Pattern 4: Multi-Agent Collaboration (The AI Team)

What it does: Multiple specialized agents work together, splitting tasks and debating ideas, to produce better solutions than any single agent could. Imagine hiring a team instead of one generalist: a researcher gathers papers, a coder implements solutions, an analyst validates the data, and a critic reviews the final output.

How it works: Each "agent" is simply an LLM prompted with a specific persona and instructions. A supervisor (orchestrator) routes tasks to domain-specific sub-agents. Google's Agent Development Kit supports three composition styles: SequentialAgent (pipeline, like a data processing chain), ParallelAgent (concurrent, like three reviewers checking a pull request simultaneously), and a coordinator pattern, in which an LLM-driven agent delegates to sub-agents based on their descriptions. Critical detail: when running agents in parallel, each must write to a unique state key to prevent race conditions on shared state.

Why it matters: A single agent tasked with too many responsibilities degrades in quality as instruction complexity grows, the same way monolithic software does. The agentic world is going through its own microservices moment. Gartner reported a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025. The era of the single general-purpose model is ending. The future is fleets of specialized agents communicating through shared protocols.

How the Patterns Fit Together

These four patterns are not isolated. They compose naturally, like LEGO bricks. A research agent might use Planning to decompose a research question into sub-tasks, Tool Use for web search and document retrieval, Multi-Agent to have a separate writer and analyst work in parallel, and Reflection to critique and improve the draft report before delivery.

Frameworks like LangGraph, AutoGen, CrewAI, and Google ADK implement these patterns explicitly. But here is Ng's sharpest observation from his course, and the one most engineers overlook: the single biggest predictor of whether a team executes well is not which pattern they choose. It is their ability to drive a disciplined process for evals and error analysis. Build the agent. Measure where it fails. Fix that specific component. Repeat. Let the data guide you, instead of guessing.
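The "measure where it fails" step can be as simple as tallying which component broke first in each failed run. The sketch below is one hedged way to do that, not the method taught in the course: `error_analysis` is a hypothetical helper, and the traces and checks are made-up toy data.

```python
from collections import Counter
from typing import Any, Callable, Dict, List

Trace = Dict[str, Any]                       # component name -> that component's output
Checks = Dict[str, Callable[[Any], bool]]    # component name -> "is this output OK?"


def error_analysis(traces: List[Trace], checks: Checks) -> Counter:
    """For each run, blame the first component (in pipeline order) whose output fails its check."""
    tally: Counter = Counter()
    for trace in traces:
        for component, check in checks.items():
            if not check(trace[component]):
                tally[component] += 1
                break  # later components inherit the upstream error, so stop here
    return tally


# Toy traces (assumptions): a two-stage research agent, search then draft.
traces: List[Trace] = [
    {"search": ["doc1"], "draft": "Answer [doc1]"},
    {"search": [], "draft": "Answer"},
    {"search": [], "draft": "Answer"},
    {"search": ["doc2"], "draft": "Answer"},
]

# Checks are listed in pipeline order; dicts preserve insertion order.
checks: Checks = {
    "search": lambda results: len(results) > 0,   # did retrieval return anything?
    "draft": lambda text: "[" in text,            # does the draft cite a source?
}

tally = error_analysis(traces, checks)
print(tally.most_common())
```

In this toy data the search stage accounts for two of the three failures, so that is the component to fix first.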

The bottom line: Roughly 95% of AI projects fail to deliver value, according to MIT's Project NANDA. The failures are architecture problems, not model problems. These four patterns are the structured fix: self-correction through Reflection, real-world grounding through Tool Use, task decomposition through Planning, and specialization through Multi-Agent Collaboration. Start with the simplest pattern that addresses your core failure mode. Layer more when the data tells you to. Frameworks change. These patterns persist.

The gap between 48.1% and 95.1% was not closed by a bigger model. It was closed by giving a smaller model the ability to plan, act, check its work, and try again. That is the core lesson. The architecture around the model matters more than the model itself.

Sources: Andrew Ng / The Batch · DeepLearning.AI Agentic AI Course · Google ADK Multi-Agent Patterns · ML Mastery Trends 2026